Before the invention of photography, the only way to share museum collections with people across the globe was to draw or paint a picture of an object or to create a model of it. This changed in the late 19th century, as photographers started working in museums and were able to create accurate images of our objects much faster than before.

More than a hundred years later, we are now taking the next step towards democratising museum collections and making them accessible to the public. Using a technique called photogrammetry, my team and I have started creating digital 3D models of our objects, and our digital team is working on making them accessible via Sketchfab and our collections online website.

To enable all our colleagues to take part in this exciting new chapter, I rolled out several training sessions designed to give them an idea of the principles behind photogrammetry and of how 3D models can be created from individual photographs. While members of Collections Services were especially interested in the documentation and preservation aspect, colleagues from New Media, Learning and the Content Development Team were able to gain a better understanding of how 3D models can be used to tell authentic stories and help the public engage with our collection in new ways.

The training was divided into three sections. The first asked all attendees to dig out their algebra knowledge and remember how a point in space can be determined using basic triangulation. For photogrammetry, this means that two images showing the same features of an object, taken from two different camera positions, enable specialist software to re-create the location of those features in a three-dimensional digital space.

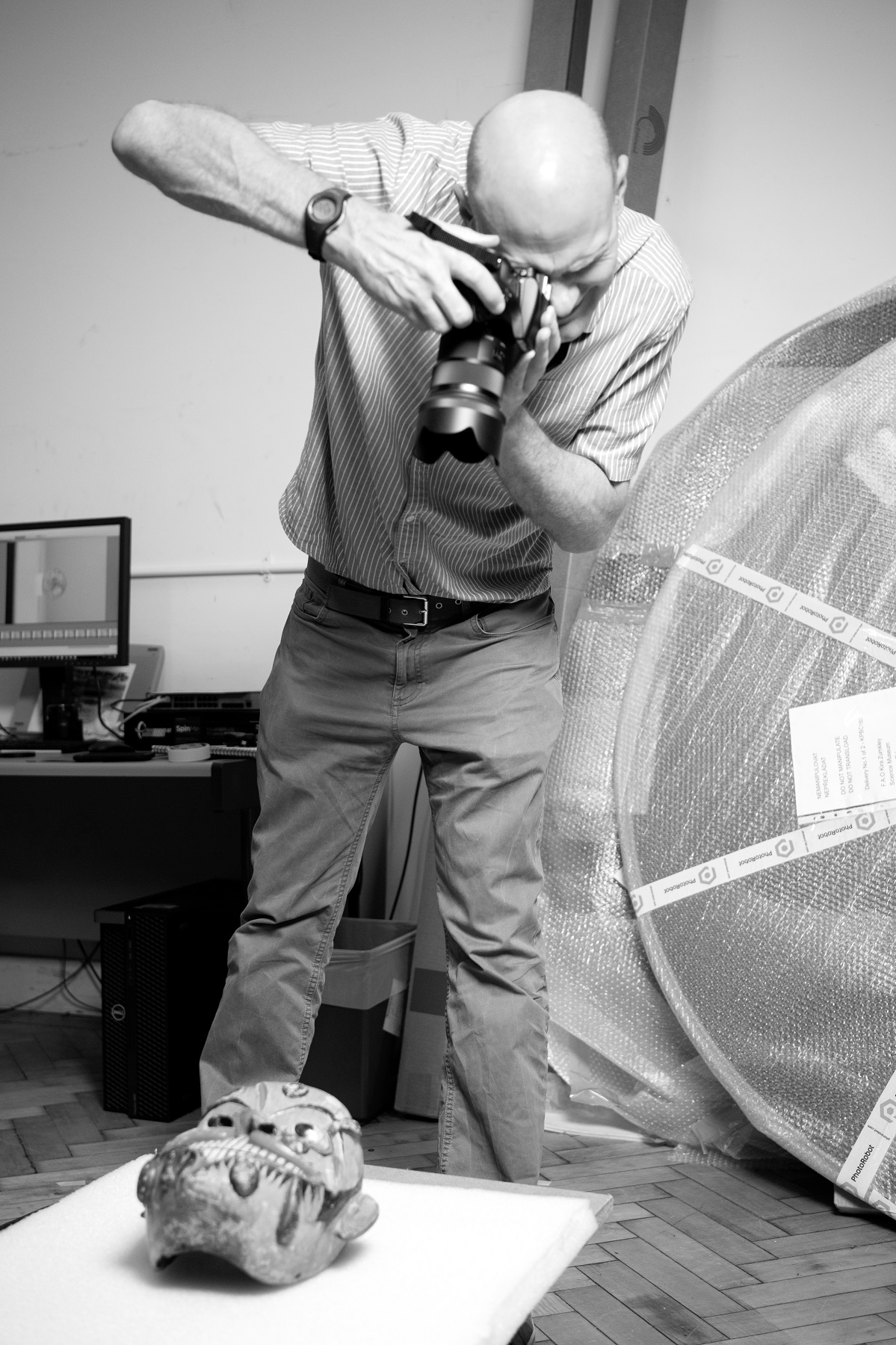
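To make the triangulation principle a little more concrete, here is a minimal Python sketch. It is a deliberately simplified, two-dimensional illustration and not what the photogrammetry software actually runs: it assumes we already know both camera positions and the direction in which each camera sees the same feature, and it simply intersects the two sight lines. The function name `triangulate_2d` and the example coordinates are made up for this illustration.

```python
import math


def triangulate_2d(cam_a, bearing_a, cam_b, bearing_b):
    """Locate a feature by intersecting two sight lines in a 2D plane.

    cam_a, cam_b: (x, y) positions of the two cameras (assumed known).
    bearing_a, bearing_b: direction from each camera to the feature,
    in radians, measured anticlockwise from the positive x-axis.
    """
    ax, ay = cam_a
    bx, by = cam_b

    # Unit direction vectors of the two sight lines.
    dax, day = math.cos(bearing_a), math.sin(bearing_a)
    dbx, dby = math.cos(bearing_b), math.sin(bearing_b)

    # Solve cam_a + t * dir_a == cam_b + s * dir_b for t.
    denom = dax * dby - day * dbx
    if abs(denom) < 1e-12:
        raise ValueError("sight lines are parallel; no unique intersection")
    t = ((bx - ax) * dby - (by - ay) * dbx) / denom

    return (ax + t * dax, ay + t * day)


# Hypothetical set-up: two cameras two metres apart, both seeing the same feature.
feature = triangulate_2d((0.0, 0.0), math.radians(45), (2.0, 0.0), math.radians(135))
print(feature)  # approximately (1.0, 1.0)
```

Real photogrammetry software works in three dimensions, has to estimate the camera positions as well, and matches thousands of features across many images, but the underlying idea of intersecting sight lines is the same.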

With this principle in mind (and another hour or two focusing on lighting, white balance, depth of field, calibration sets and all sorts of other fun things), the second part of the day saw my colleagues capturing images of our test object. They made sure that all images overlapped by at least 60%, both horizontally and vertically, so that every object feature was captured in at least nine images. For this we simulated two imaging scenarios. In the first, we captured an object in situ; in the second, we used our new turntable equipment and positioned an object in its centre.

Once we had finished photographing both objects from all angles, we looked at how our images should be processed in Adobe Camera Raw to correct white balance, chromatic aberration and vignetting. With all this done, we loaded our images into the photogrammetry software, followed a processing workflow designed to reduce the RMS reprojection error, and created our final 3D model.
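For anyone wondering what the RMS reprojection error measures: once the software has placed a feature in 3D space, it projects that point back into each photograph and compares the result with where the feature was actually detected. The root mean square of those pixel distances is the RMS reprojection error, and the smaller it is, the better the model agrees with the photographs. The short Python sketch below shows the calculation only; the function name and the pixel coordinates are invented for illustration, and the photogrammetry software computes this value internally.

```python
import math


def rms_reprojection_error(observed, reprojected):
    """Root mean square distance, in pixels, between matched image points.

    observed: (x, y) pixel positions where features were detected.
    reprojected: (x, y) pixel positions where the reconstructed 3D points
    land when projected back into the same images, in the same order.
    """
    if not observed or len(observed) != len(reprojected):
        raise ValueError("need two non-empty point lists of equal length")

    squared_distances = [
        (ox - px) ** 2 + (oy - py) ** 2
        for (ox, oy), (px, py) in zip(observed, reprojected)
    ]
    return math.sqrt(sum(squared_distances) / len(squared_distances))


# Made-up example: one feature is off by 5 pixels, the other matches exactly.
observed = [(0.0, 0.0), (10.0, 10.0)]
reprojected = [(3.0, 4.0), (10.0, 10.0)]
print(round(rms_reprojection_error(observed, reprojected), 3))  # 3.536
```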

It was fantastic to see what this new technology is able to achieve, and I am looking forward to seeing more of our collection items digitised in 3D and made available to the public.

Find out more about what it took to scan a high-resolution 3D model of Stephenson’s Rocket here.